Open Source First: How to Build Enterprise Software Without Paying for Everything

Open Source, Linux, Cost Optimisation, Enterprise Architecture

Enterprise software licensing costs are one of the largest and least scrutinised line items in IT budgets. Oracle databases, VMware virtualisation, Microsoft productivity suites, SAP ERP: the cumulative cost of proprietary software licences can consume 30-40% of an IT department’s annual budget.

Open source alternatives exist for almost every category of enterprise software. DeepTechComputing has helped clients save millions in licensing costs by replacing proprietary software with open-source equivalents, without sacrificing reliability, performance, or support.

The open source misconception

The most persistent myth about open source software is that ‘free’ means ‘unsupported’ or ‘risky.’ This was true two decades ago. It is not true today.

Linux powers 96% of the world’s top 1 million web servers. PostgreSQL handles the transactional workloads of companies like Instagram and Apple. Kubernetes, originally built at Google, runs production workloads at Netflix, Airbnb, and Spotify. Nginx processes more HTTP requests globally than any other web server.

These are not science experiments. They are the backbone of global digital infrastructure.

The open source stack that replaces proprietary enterprise software

Here is a practical mapping of common proprietary enterprise tools to their open source equivalents:

The true cost of open source

Open source software has real costs, just not licensing costs. The honest accounting:

For most organisations, these costs are substantially lower than the licensing fees they replace. The break-even point is typically 12-18 months after migration.

The question is not ‘can we afford to use open source?’ It is ‘can we afford to keep paying for proprietary software when open alternatives are this mature?’

Linux consulting: the foundation of the open stack

Linux is the substrate on which the entire open source stack runs.
Organisations that invest in deep Linux expertise (kernel configuration, performance tuning, security hardening, containerisation) gain a compounding advantage over time. This is core to what DeepTechComputing does. Our Linux consulting practice helps organisations:

Starting your open source journey

The most effective migrations are incremental. We recommend starting with infrastructure tooling (monitoring, log management, CI/CD), where the blast radius of a problem is limited to internal teams. Once your organisation has built confidence with open source operations, extend to databases, then application platforms.

The journey to an open-source-first architecture takes 18-36 months for a mid-sized enterprise. The organisations that begin now will have a structural cost advantage, and dramatically more architectural flexibility, over those that delay.

DeepTechComputing provides end-to-end support for this transition: assessment, architecture design, migration execution, and ongoing operations. If you’re ready to start the conversation, reach out to our team in Bhubaneswar.
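
The break-even claim in the cost section of this post can be checked with simple arithmetic. The sketch below is a minimal illustration, not a costing model: the function name, its parameters, and every figure in the usage note are hypothetical, and it assumes licence fees and open source running costs (support contracts, staff time, hosting) are spread evenly across the year, with the migration paid as a one-off.

```python
def break_even_month(annual_licence_cost, migration_cost, annual_run_cost,
                     horizon_months=60):
    """Return the first month in which the cumulative open source cost is no
    higher than the cumulative licensing cost, or None if that never happens
    within the horizon. All inputs are hypothetical planning figures."""
    monthly_licence = annual_licence_cost / 12
    monthly_run = annual_run_cost / 12
    for month in range(1, horizon_months + 1):
        proprietary_total = monthly_licence * month
        # One-off migration spend plus ongoing support and operations.
        open_source_total = migration_cost + monthly_run * month
        if open_source_total <= proprietary_total:
            return month
    return None


if __name__ == "__main__":
    # Made-up figures: 1.2M per year in licences, a 0.9M one-off migration,
    # and 0.48M per year to run and support the open source replacement.
    print(break_even_month(1_200_000, 900_000, 480_000))
```

With these made-up inputs the monthly saving is 60,000 against a 900,000 migration, so the function returns month 15. If the running cost exceeds the licence cost, it returns `None`, which is the case where migration never pays for itself.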

Linux at the Edge: Building Embedded Systems That Last

Embedded Systems, Linux Kernel, RTOS, Edge Computing

Most people interact with Linux dozens of times a day without knowing it, through their routers, smart TVs, industrial controllers, medical devices, and in-vehicle infotainment systems. Embedded Linux powers the edge computing revolution, and getting it right requires a depth of expertise that’s rare in India’s technology landscape.

DeepTechComputing has built embedded Linux systems for Comcast’s set-top box platform and mission-critical defence applications for Thales. Here’s what we’ve learned.

What makes embedded Linux different

Desktop and server Linux distributions are designed for general-purpose computing: large storage, significant RAM, internet connectivity, a keyboard and screen. Embedded Linux operates under fundamentally different constraints:

These constraints require a fundamentally different approach to kernel configuration, BSP (board support package) development, and system integration.

The build system: Yocto vs Buildroot

Two build systems dominate embedded Linux development: Yocto Project and Buildroot.

Buildroot excels for simpler systems where you want a minimal, reproducible image built quickly. It has a shallow learning curve and produces small, clean root filesystems. For products with limited scope and a small team, Buildroot is often the right choice.

Yocto is the industrial-grade solution. It’s a complete framework for building custom Linux distributions, with sophisticated layer management, recipe inheritance, and the ability to generate SDK toolchains for application developers. It’s more complex, but the investment pays off for larger teams and products that need to support multiple hardware variants.

At DeepTechComputing, we’ve deployed both in production. Our recommendation depends on the project’s scope, team size, and expected hardware variability.

A well-configured embedded Linux system should boot in under two seconds and occupy less than 50 MB of flash.
If you’re not hitting those targets, your configuration needs work.

Real-time Linux: PREEMPT_RT and beyond

Standard Linux is not a real-time operating system. Its scheduler optimises for throughput, not latency. For applications requiring deterministic response times (industrial motion control, audio processing, robotics), this matters enormously.

The PREEMPT_RT patch set converts Linux into a fully preemptible kernel, dramatically reducing worst-case interrupt latency. Since kernel 6.12, PREEMPT_RT can be enabled in the mainline kernel on the major architectures, making real-time Linux far more accessible.

For applications with stricter requirements, a dual-kernel approach (Xenomai or RTAI) or a dedicated RTOS (FreeRTOS, Zephyr) running alongside Linux on a heterogeneous processor provides hard real-time guarantees.

Security hardening for embedded systems

Embedded systems are increasingly networked and increasingly targeted. The security practices that protect server infrastructure apply at the edge too, often with greater urgency because devices are deployed in physically accessible locations. Essential hardening measures:

The long-term maintenance problem

Perhaps the biggest challenge in embedded Linux development is maintenance. Products ship and spend years in the field. Security vulnerabilities are discovered. Customers want new features. Hardware components are discontinued.

The teams that manage this best invest early in: a stable LTS kernel base (not the latest shiny version), rigorous automated testing on hardware, and clear policies for backporting security fixes versus full kernel upgrades.
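
The throughput-versus-latency point in the real-time section above is easy to observe from user space. The sketch below is a rough probe only: it asks for a fixed sleep and records how late each wake-up arrives. The function name and defaults are hypothetical, and Python's own overhead inflates the numbers, so for real qualification work a dedicated tool such as `cyclictest` on the target hardware is the better measure.

```python
import time


def measure_wakeup_latency(interval_s=0.001, samples=200):
    """Sleep for a fixed interval repeatedly and record how far each wake-up
    overshoots the requested time, in microseconds. A rough user-space probe,
    not a substitute for kernel-level latency testing."""
    overshoots_us = []
    requested_ns = interval_s * 1e9
    for _ in range(samples):
        start = time.perf_counter_ns()
        time.sleep(interval_s)
        elapsed_ns = time.perf_counter_ns() - start
        overshoots_us.append((elapsed_ns - requested_ns) / 1000)
    overshoots_us.sort()
    return {
        "min_us": overshoots_us[0],
        "median_us": overshoots_us[len(overshoots_us) // 2],
        # The worst case is the figure that matters for real-time work.
        "max_us": overshoots_us[-1],
    }


if __name__ == "__main__":
    print(measure_wakeup_latency())
```

Running it on an idle system and again under heavy load usually shows the median barely moving while the maximum grows. That widening worst case is the behaviour a preemptible kernel is meant to bound.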

DevOps in 2025: Beyond CI/CD Pipelines

DevOps, Kubernetes, Platform Engineering, CI/CD

When most engineering teams hear ‘DevOps,’ they think pipelines: automated builds, tests, and deployments. And yes, CI/CD is foundational. But modern DevOps in 2025 is about something broader: building internal platforms that make developers fast, safe, and self-sufficient.

This shift, from DevOps as a set of tools to DevOps as a product philosophy, is what separates high-performing engineering organisations from the rest.

The evolution: from CI/CD to platform engineering

Traditional DevOps thinking treats infrastructure as a shared responsibility between developers and operations. Platform engineering takes this further: the infrastructure, tooling, and developer experience become a product, built and maintained by a dedicated team, consumed by developers as a self-service capability.

Leading technology companies (Spotify, Airbnb, Shopify) pioneered this model. The tools have now matured to the point where any serious engineering organisation can adopt it.

The Kubernetes question

Kubernetes has become the de facto standard for container orchestration. But for many organisations, running and managing Kubernetes clusters is more overhead than value. The ecosystem has evolved accordingly:

At DeepTechComputing, we’ve deployed Kubernetes environments for clients ranging from defence technology firms to e-commerce platforms. The key insight: Kubernetes is not a destination, it’s an enabling layer. What you build on top of it is what matters.

GitOps: the practice every team should adopt

GitOps is simple in principle: everything (application code, infrastructure definitions, configuration) lives in Git. Changes to production happen only through pull requests, reviewed by a human, and applied automatically by a controller running in the cluster. The benefits are substantial:

Infrastructure is code. Treat it with the same discipline (reviews, testing, version control) as your application code.
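
The controller behaviour described in the GitOps section above comes down to a reconcile step: compare the state declared in Git with what is actually running, then act on the difference. The sketch below is a toy illustration of that idea using plain dictionaries. The function names, workload names, and specs are hypothetical, and real controllers work against the cluster API rather than in-memory data.

```python
def plan_reconcile(desired, actual):
    """Compare desired state (as declared in Git) with actual state (as
    observed in the cluster) and return the actions needed to converge."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    # Anything running that Git no longer declares is drift to be removed.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name))
    return actions


def apply_plan(actions, desired, actual):
    """Return the new actual state after carrying out the planned actions."""
    new_state = dict(actual)
    for verb, name in actions:
        if verb == "delete":
            del new_state[name]
        else:
            new_state[name] = desired[name]
    return new_state


if __name__ == "__main__":
    # Hypothetical workloads, for illustration only.
    desired = {
        "web": {"image": "web:1.4", "replicas": 3},
        "worker": {"image": "worker:2.0", "replicas": 1},
    }
    actual = {
        "web": {"image": "web:1.3", "replicas": 3},
        "legacy-cron": {"image": "cron:0.9", "replicas": 1},
    }
    plan = plan_reconcile(desired, actual)
    print(plan)
    print(plan_reconcile(desired, apply_plan(plan, desired, actual)))
```

The second call returns an empty plan. Once the actual state matches Git there is nothing left to do, so the same loop can run continuously and only acts when someone merges a change or the cluster drifts.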

Observability: the missing piece

Many teams invest heavily in deployment automation and neglect observability. This is backwards. You cannot run a reliable system you cannot see. Modern observability rests on three pillars:

Tools like Prometheus, Grafana, Jaeger, and the OpenTelemetry standard have made world-class observability accessible to teams of any size. There’s no excuse for flying blind in 2025.

Shift left: moving security into the pipeline

DevSecOps, which integrates security into the development pipeline rather than applying it at the end, is no longer optional. With SAST (static analysis), DAST (dynamic analysis), container image scanning, and secrets management integrated into CI/CD, vulnerabilities are caught in development rather than production.

Our recommended toolchain: Trivy for container scanning, HashiCorp Vault for secrets management, SonarQube for static analysis, and OWASP ZAP for dynamic testing.
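
Catching vulnerabilities in the pipeline usually means a gate step that fails the build when a scan reports serious findings. The sketch below shows one way such a gate might look. It assumes a JSON scan report shaped like Trivy's JSON output (a `Results` list whose entries carry a `Vulnerabilities` list with `VulnerabilityID` and `Severity` fields), so check the field names against the scanner version you run. The severity policy, the sample report, and the identifiers in it are made up for illustration.

```python
import json

# Hypothetical policy: these severities block a release.
BLOCKING_SEVERITIES = {"CRITICAL", "HIGH"}


def blocking_findings(report):
    """Collect (target, vulnerability id, severity) for every finding at a
    blocking severity. The report shape is an assumption, see the lead-in."""
    findings = []
    for result in report.get("Results", []):
        # Some targets have no vulnerability list at all, hence the `or []`.
        for vuln in result.get("Vulnerabilities") or []:
            if vuln.get("Severity") in BLOCKING_SEVERITIES:
                findings.append((
                    result.get("Target", "?"),
                    vuln.get("VulnerabilityID", "?"),
                    vuln["Severity"],
                ))
    return findings


def gate(report_json):
    """Return a process exit code: 0 lets the pipeline continue, 1 stops it."""
    findings = blocking_findings(json.loads(report_json))
    for target, vuln_id, severity in findings:
        print(f"BLOCKED {severity} {vuln_id} in {target}")
    return 1 if findings else 0


# Made-up report for illustration; the identifiers are not real advisories.
SAMPLE_REPORT = json.dumps({
    "Results": [
        {"Target": "app-image", "Vulnerabilities": [
            {"VulnerabilityID": "CVE-0000-0001", "Severity": "CRITICAL"},
            {"VulnerabilityID": "CVE-0000-0002", "Severity": "LOW"},
        ]},
        {"Target": "requirements.txt", "Vulnerabilities": None},
    ]
})

if __name__ == "__main__":
    raise SystemExit(gate(SAMPLE_REPORT))
```

Scanners often offer this directly (Trivy, for example, has `--severity` and `--exit-code` options). A small script like this becomes useful when the policy needs more than a severity threshold, such as an allow-list of accepted findings.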

Generative AI in the Enterprise: Cutting Through the Hype

Generative AI, LLMs, Machine Learning, Enterprise Tech

Generative AI has gone from a research curiosity to a boardroom priority in less than two years. Every enterprise wants a ‘ChatGPT for our data.’ Most don’t know what that actually means, or what it costs to build responsibly.

This blog cuts through the noise and gives you a practical framework for evaluating, building, and deploying generative AI in a real enterprise context.

What generative AI actually is (and isn’t)

Large language models like GPT-4, Claude, and Mistral are trained on vast text corpora to predict the next token in a sequence. This simple mechanism produces remarkably useful outputs: writing, summarisation, code generation, question answering.

What they are not: databases. They do not ‘know’ your internal documents unless you provide that context, either through fine-tuning or retrieval-augmented generation (RAG). They hallucinate, confidently producing plausible-sounding but incorrect information. And they are not deterministic: the same question can produce different answers on different runs.

Understanding these limitations is the foundation of responsible enterprise AI deployment.

The four patterns of enterprise AI adoption

In our work with clients, we see four common patterns:

Of these, document Q&A and code assistance deliver the fastest, most measurable ROI and carry the lowest risk. Customer-facing chatbots require significantly more investment in safety, testing, and monitoring.

RAG vs fine-tuning: which should you use?

The most common question we get from enterprise clients: should we fine-tune a model on our data, or use RAG?

For most use cases, RAG is the right answer. Here’s why: fine-tuning is expensive (requires labelled data, GPU time, and ongoing maintenance), and it bakes knowledge into model weights in a way that’s hard to update. If your policy documents change monthly, you don’t want that knowledge permanently encoded in a fine-tuned model.

RAG (retrieval-augmented generation) keeps your knowledge in a vector database. When a user asks a question, relevant chunks are retrieved and passed to the LLM as context. Updates are as simple as re-indexing a document. It’s cheaper, faster to build, and easier to maintain.

Fine-tuning makes sense when you need the model to behave differently: adopting a specific communication style, mastering a domain-specific vocabulary, or generating structured outputs in a precise format.

Don’t build a generative AI product. Build a generative AI system, with guardrails, monitoring, and human oversight baked in from the start.

The safety and compliance layer you can’t skip

Enterprise AI deployments must address three areas that most vendors gloss over:

At DeepTechComputing, we build AI systems with these considerations at the architecture level, not bolted on as an afterthought.

Getting started: a 90-day roadmap

Generative AI will reshape every industry. The organisations that build systematic capability now, rather than chasing demos, will lead their sectors in five years.
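
The retrieve-then-prompt flow from the RAG section above can be sketched in a few lines. This is a toy under stated assumptions: a bag-of-words count stands in for a real embedding model, an in-memory dictionary stands in for a vector database, and the class, function names, and policy snippets are all hypothetical. No LLM is called; the sketch stops at the prompt that would be sent.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lower-cased term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class ToyIndex:
    """In-memory stand-in for a vector database."""

    def __init__(self):
        self.chunks = {}  # chunk_id -> (text, vector)

    def upsert(self, chunk_id, text):
        # Re-indexing a changed document is just overwriting its entry.
        self.chunks[chunk_id] = (text, embed(text))

    def retrieve(self, question, k=2):
        query = embed(question)
        ranked = sorted(self.chunks.values(),
                        key=lambda chunk: cosine(query, chunk[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question, index, k=2):
    """Assemble the prompt that would be sent to the LLM."""
    context = "\n".join(f"- {chunk}" for chunk in index.retrieve(question, k))
    return ("Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")


if __name__ == "__main__":
    # Made-up policy snippets for illustration.
    index = ToyIndex()
    index.upsert("leave", "Employees accrue 18 days of paid leave per year.")
    index.upsert("expenses", "Expense claims must be filed within 30 days.")
    index.upsert("security", "Laptops must use full disk encryption.")
    question = "How many days of paid leave do employees get?"
    print(build_prompt(question, index, k=1))
    # The policy changes: re-index one chunk, no retraining involved.
    index.upsert("leave", "Employees accrue 24 days of paid leave per year.")
    print(build_prompt(question, index, k=1))
```

The second prompt carries the updated policy text immediately. This is the maintenance advantage over fine-tuning: the knowledge lives in the index, not in the model weights.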

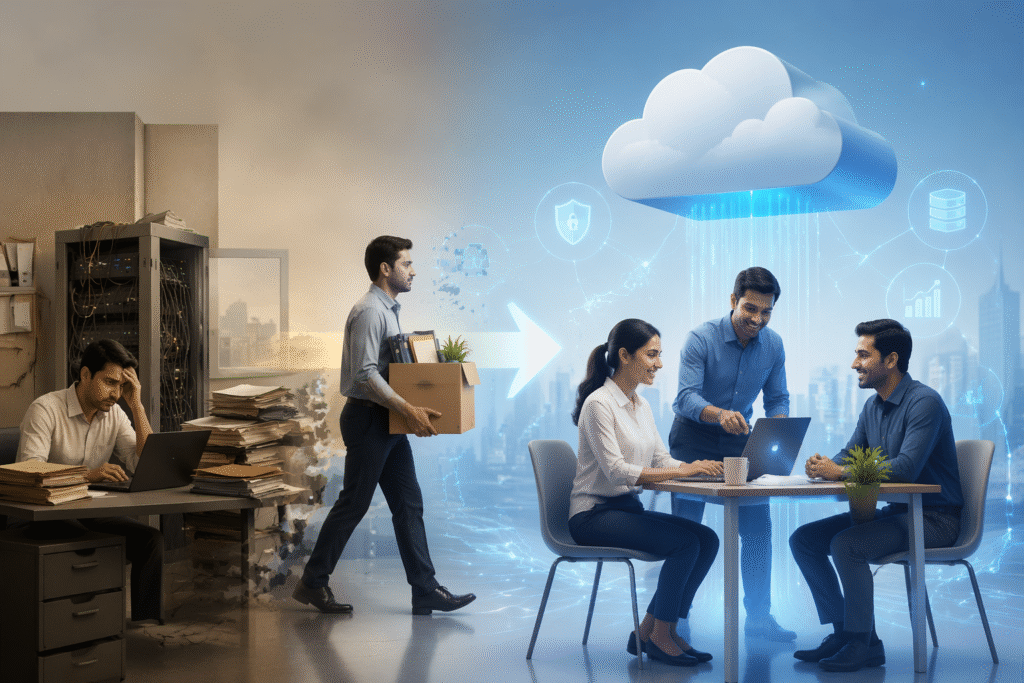

Why Indian SMEs Are Migrating to Cloud — And What They’re Getting Wrong

Cloud Computing, AWS / Azure / GCP, Business Strategy

Cloud adoption across India’s small and medium enterprises has exploded over the last three years. From Bhubaneswar to Bengaluru, business owners are spinning up servers, subscribing to SaaS platforms, and moving spreadsheets to the cloud, often without a coherent strategy. The result? Wasted spend, security gaps, and frustrated IT teams.

At DeepTechComputing, we’ve seen this pattern firsthand across more than 100 projects. Here’s what’s really happening, and how to avoid the most expensive mistakes.

The rush to cloud is real, but reckless

According to industry analysts, India’s cloud market is growing at over 30% annually. Yet the majority of SME migrations are lift-and-shift: simply moving on-premises VMs to cloud instances with zero architectural changes. This approach preserves all the problems of legacy infrastructure while adding a monthly cloud bill. Common red flags we encounter:

The right approach: cloud-native from day one

A well-architected cloud environment isn’t just about where your servers live; it’s about how your applications are designed. The AWS Well-Architected Framework and Azure’s equivalent provide excellent starting points, but applying them to an Indian SME context requires local expertise. Key principles we apply at DeepTechComputing:

Multi-cloud vs single-cloud: the debate

Many enterprises chase multi-cloud strategies believing it reduces vendor lock-in. For most Indian SMEs, this is premature. The operational complexity of managing AWS, Azure, and GCP simultaneously (separate IAM policies, different networking models, distinct monitoring tools) far outweighs the benefits until you’re operating at significant scale.

Our recommendation: go deep on one cloud provider first. Master its networking, security, and managed services. Only introduce a second provider when you have a specific technical reason, not because a vendor salesperson recommended it.

OpenStack: the option nobody talks about

For organisations with strict data sovereignty requirements or predictable, high-volume workloads, OpenStack offers a compelling alternative to public cloud. DeepTechComputing is one of a handful of firms in Odisha with hands-on OpenStack deployment experience, having helped clients build private clouds that deliver public-cloud-like agility at a fraction of the long-term cost.

What to do this week

Cloud migration done right is one of the highest-ROI investments a growing business can make. Done wrong, it becomes a recurring drain on budget and morale. The difference lies in architectural discipline applied from the very beginning.